
9/10/2023 Cache coherence problems

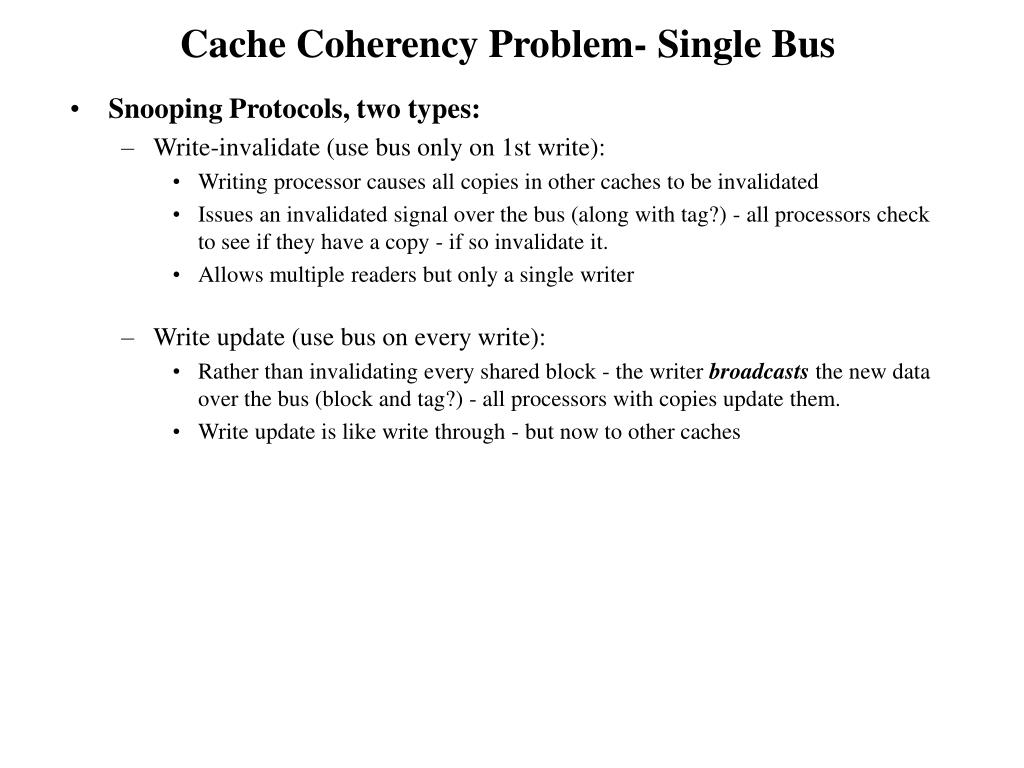

Why do we bother with caches in the first place? The principal advantage of an enterprise cache is the speed and efficiency with which data can be accessed. At the same time, one of the greatest challenges in managing an enterprise database is maintaining cache consistency. If you believe Ralph Waldo Emerson, a foolish consistency may be the hobgoblin of little minds, but when it comes to implementing a scalable, successful enterprise-level caching strategy, there’s nothing foolish about consistency.

A coherence protocol enforces cache coherence in a multi-processor system. The goal is that two clients must never see different values of the same shared data. The protocol must fulfill the basic requirements of coherence, and it can be tailored to the target system or application. Protocols can also be classified as snoopy or directory-based. Generally, early systems used directory-based protocols, where a directory tracks the data being shared and the sharers. In a snoopy protocol, transaction requests (to read, write or upgrade) are sent to all processors. All processors snoop the requests and respond appropriately.

If there is enough bandwidth, snooping-based protocols tend to be faster, because every transaction is a request/response seen by all processors. The disadvantage is that snooping does not scale. Each request must be broadcast to all nodes in the system, so as the system grows, the size of the bus (logical or physical) and the bandwidth it provides must grow with it. Directories, on the other hand, tend to have longer latencies (a 3-hop request/forward/response), but use much less bandwidth because the messages are point to point rather than broadcast. For this reason, many larger systems (>64 processors) use directory-based cache coherence.
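As a rough illustration of the bandwidth trade-off between snooping and directories, the sketch below counts messages under a deliberately simplified model. It assumes a snooping request reaches every other node once, and a directory request costs a fixed three point-to-point messages (request, forward, response). The function names and the model itself are hypothetical, chosen for illustration, and ignore real-world details such as responses from multiple sharers.

```python
def snoop_messages(num_nodes: int, num_requests: int) -> int:
    """Assumed model: each request is broadcast to every other node."""
    return num_requests * (num_nodes - 1)


def directory_messages(num_requests: int, hops: int = 3) -> int:
    """Assumed model: each request costs a fixed number of point-to-point hops."""
    return num_requests * hops


if __name__ == "__main__":
    # Compare traffic for 1000 requests as the system grows.
    for nodes in (4, 16, 64, 256):
        print(nodes, snoop_messages(nodes, 1000), directory_messages(1000))
```

Under these assumptions, snooping traffic grows with the node count while directory traffic per request stays flat, which is consistent with larger systems favoring directories.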
Cache Coherence Mechanisms

The two most common mechanisms for ensuring coherence are snooping and directory-based. Each mechanism has its own advantages and disadvantages.

Definition

Coherence defines the behavior of reads and writes to a single address location. In theory, cache coherence can be enforced at load/store granularity. In practice, however, it is usually enforced at the granularity of cache blocks. The same data can be present in several caches at the same time; keeping those copies consistent is the job of cache coherence (some systems refer to this as global memory).

In a multi-processor system, consider that more than one processor has cached a copy of memory location X. To achieve cache coherence, the following conditions must be met:

- When processor P reads location X after a write to X by the same processor P, and no write to X by another processor occurs between the write and the read by P, X must always return the value written by P.
- When processor P1 reads location X after a write to X by another processor P2, with no other writes to X by any processor between the two accesses and with the read and write sufficiently separated, X must always return the value written by P2. This condition defines the concept of a coherent view of memory. Propagating writes to shared memory locations ensures that all caches have a coherent view of memory. If processor P1 reads the old value of X even after the write by P2, the memory is incoherent.

The above conditions satisfy the Write Propagation criterion required for cache coherence. However, they are not sufficient on their own, because they do not satisfy the Transaction Serialization condition.
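The read-after-write conditions on location X can be seen in a toy model. The sketch below is hypothetical: `ToySystem`, its write-through memory, and its invalidate-on-write behavior are assumptions chosen for brevity, not a description of a real protocol. With propagation switched off, P1 keeps returning its stale copy of X after P2 writes; with propagation on, the stale copy is invalidated and the next read returns P2's value.

```python
class ToySystem:
    """Per-processor caches over one shared memory (illustrative only)."""

    def __init__(self, num_procs: int, propagate: bool):
        self.memory = {}
        self.caches = [dict() for _ in range(num_procs)]
        self.propagate = propagate

    def read(self, proc: int, addr: str) -> int:
        cache = self.caches[proc]
        if addr not in cache:
            # Miss: fetch from shared memory (unwritten locations read as 0).
            cache[addr] = self.memory.get(addr, 0)
        return cache[addr]

    def write(self, proc: int, addr: str, value: int) -> None:
        self.caches[proc][addr] = value
        self.memory[addr] = value  # assumed write-through policy
        if self.propagate:
            # Invalidate every other processor's copy of this address.
            for other, cache in enumerate(self.caches):
                if other != proc:
                    cache.pop(addr, None)


if __name__ == "__main__":
    for propagate in (False, True):
        system = ToySystem(num_procs=2, propagate=propagate)
        system.read(0, "X")        # P1 caches X
        system.write(1, "X", 42)   # P2 writes X
        print(propagate, system.read(0, "X"))
```

Invalidation is only one way to propagate a write; updating the other copies in place would satisfy the same condition.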
In a shared-memory multiprocessor system, each processor has a separate cache memory, and there can be many copies of shared data: one in main memory, and one in the local cache of each processor that requested it. When one copy of the data is changed, the other copies must reflect the change. Cache coherence ensures that changes in the values of shared operands (data) are propagated throughout the system in a timely fashion.

The following are the requirements for cache coherence:

- Write Propagation: Any change to data in one cache must be propagated to the other copies of that cache line in the peer caches.
- Transaction Serialization: All processors must see the reads and writes to a single memory location in the same order.
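Transaction Serialization can be made concrete with a small check. The sketch below is an assumption-laden illustration: the function name is hypothetical, each write to the location is assumed to carry a unique value, and each inner list is the order in which one processor observed those writes. It only detects two observers that directly disagree about the order of a pair of writes, so it is a necessary check rather than a full proof that a single global order exists.

```python
from itertools import combinations


def no_pairwise_disagreement(observations: list[list[int]]) -> bool:
    """Return False if two observers saw the same two writes in opposite orders."""
    seen_before = set()  # (a, b) means some observer saw a before b
    for observed in observations:
        for i, j in combinations(range(len(observed)), 2):
            first, second = observed[i], observed[j]
            if (second, first) in seen_before:
                return False
            seen_before.add((first, second))
    return True


if __name__ == "__main__":
    # P1 writes 1 and P2 writes 2 to the same location.
    print(no_pairwise_disagreement([[1, 2], [1, 2]]))  # both observers agree
    print(no_pairwise_disagreement([[1, 2], [2, 1]]))  # observers disagree
```

In the second call one observer sees the value 1 and then 2 while the other sees 2 and then 1, which is exactly the situation Transaction Serialization forbids, even though both writes were propagated.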
